Digitalisation

Agentic AI systems in life sciences labs

Life sciences labs generate enormous volumes of equipment and environmental data, yet many teams still struggle to translate signals into timely, confident action. Agentic artificial intelligence systems can reason within context, prioritise what matters and recommend next steps, offering a shift from reactive alerting to operational decision support.

Rob Estrella at Elemental Machines

When lab monitoring starts thinking with you

The modern lab has no shortage of signals: temperatures, pressures, humidity, vibration, door openings, run-time, utilisation, calibration status and more. Monitoring programmes have expanded for good reasons, including compliance, risk reduction and the desire to prevent disruptions. Yet a common frustration persists – teams can ‘see’ more than ever, but still feel behind when something matters. This is not a data problem. It’s an attention problem.

Alert-based monitoring waits for a threshold to be crossed, then asks a human to interpret what happened, judge urgency and coordinate response while juggling other priorities. As coverage grows, context is scattered across systems, and staff spend more time managing noise than driving decisions. In regulated environments, the stakes are higher: every response needs to be defensible, traceable and consistent.1,2

The gap between data visibility and decision-making is widening. Labs have more instrumentation, more automation and more dashboards than ever before, but human attention remains finite.

Agentic artificial intelligence (AI) is emerging as a response to that mismatch. Instead of simply presenting data (dashboards) or answering questions (chatbots), agentic systems proactively and continuously reason within context, prioritise what matters, and recommend next steps while maintaining transparent rationale and submitting to human oversight. The promise is not that ‘AI will run your lab’; it is that ‘AI will protect human attention’.

What makes agentic AI different?

Most monitoring systems do two things well: they collect data, and they alert when values breach thresholds. Some platforms add dashboards and reporting. Recent tools add conversational interfaces that let users ask questions of their data. But agentic AI systems can interpret operational context, weigh multiple signals and propose, or even initiate, workflows under human oversight. In practice, agentic AI is less about ‘automation for everything’ and more about decision support. It’s a layer that sits above sensors, analytics and dashboards, helping labs answer questions like:

• Which excursions matter most right now?

• What is driving this excursion?

• What action should be prioritised and why?

• What is the risk if we delay?

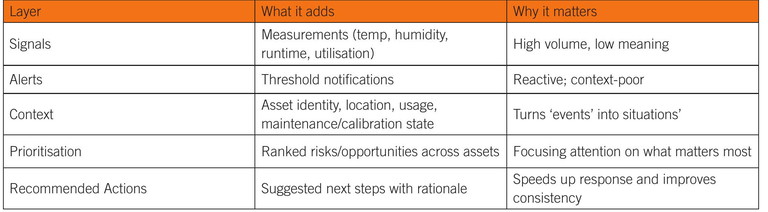

Table 1: How operational intelligence evolves in labs

The main shift is from alerting to reasoning. A typical alarm says, ‘humidity exceeded 60%’. An agentic system aims to answer, ‘what does this mean in context, what is the plausible impact, what else is happening and what should we do next?’ This distinction matters because labs don’t operate with isolated variables: equipment utilisation ties to maintenance windows and failure risk; calibration status ties to data integrity and confidence in results; environmental drift ties to product quality and stability; and every signal is embedded in processes that involve people, schedules and compliance requirements. Agentic AI can help coordinate these layers by bringing context into the decision loop.
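The contrast between an alarm and a contextual answer can be sketched in a few lines of Python. This is an illustration only: the `Reading` and `Context` types and their fields are hypothetical, simple rules stand in for the reasoning an agentic system would perform, and nothing here describes a particular product.

```python
from dataclasses import dataclass
from typing import List, Optional

HUMIDITY_LIMIT = 60.0  # % relative humidity, taken from the example alarm in the text


@dataclass
class Reading:
    asset_id: str
    humidity: float  # % relative humidity


@dataclass
class Context:
    # Hypothetical operational context; these fields are assumptions for illustration.
    calibration_overdue: bool
    maintenance_in_progress: bool
    stored_material: Optional[str]


def threshold_alarm(reading: Reading) -> Optional[str]:
    """Classic alerting: report only that the limit was crossed."""
    if reading.humidity > HUMIDITY_LIMIT:
        return f"humidity exceeded {HUMIDITY_LIMIT:.0f}%"
    return None


def contextual_summary(reading: Reading, ctx: Context) -> List[str]:
    """Attach the context a human would otherwise have to gather by hand."""
    alarm = threshold_alarm(reading)
    if alarm is None:
        return []
    notes = [f"{reading.asset_id}: {alarm}"]
    if ctx.calibration_overdue:
        notes.append("sensor calibration overdue: confirm the reading first")
    if ctx.maintenance_in_progress:
        notes.append("maintenance in progress: a possible driver")
    if ctx.stored_material:
        notes.append(f"material potentially affected: {ctx.stored_material}")
    return notes
```

The alarm function returns one fact. The summary function returns the same fact plus the surrounding conditions that determine whether, and how urgently, someone should act.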

From signals to recommendations: a simple maturity model

A useful way to understand agentic AI in lab operations is to treat it as the next step in progression, rather than a replacement for existing systems. Most labs already have the foundation, such as sensors, equipment monitoring, alerts, dashboards and standard operating procedures. Agentic AI builds on that base by integrating context and prioritisation (Table 1).

Many labs are already feeling pain at the ‘alerts’ layer, as they have so many thresholds that they cannot respond meaningfully to all of them. Over time, this creates a dangerous pattern in which staff either ignore alarms or overreact to low-value signals while missing higher-risk situations – the all-too-familiar ‘alert fatigue’. Agentic AI can help break that cycle by helping labs triage and act more effectively.

The real value: protecting attention while improving consistency

Lab operations depend on repeatability. In a regulated environment, ‘doing the right thing’ is not enough – the lab must do it consistently and be able to show how decisions were made. This is where agentic AI becomes interesting. Its best use case is not replacing human judgment, but reducing the cognitive burden of interpreting scattered data and coordinating a response. In many labs, a single event triggers multiple tasks:

• Confirm the signal is real (sensor check, calibration check)

• Determine scope (one asset or a systemic pattern?)

• Assess impact (product risk, timeline impact and compliance impact)

• Initiate response (maintenance, quarantine, investigation and deviation)

• Document what happened.

Agentic AI can support this through structured interpretation: identifying likely drivers, surfacing relevant history, suggesting next steps and pointing to evidence. That can shorten response time, reduce variability in decision quality and lower the operational load on already-stretched teams.

This is especially valuable in environments where alarms can cascade. Alarm system standards in process industries have long recognised that alarm floods degrade performance and can create safety and quality risks.3

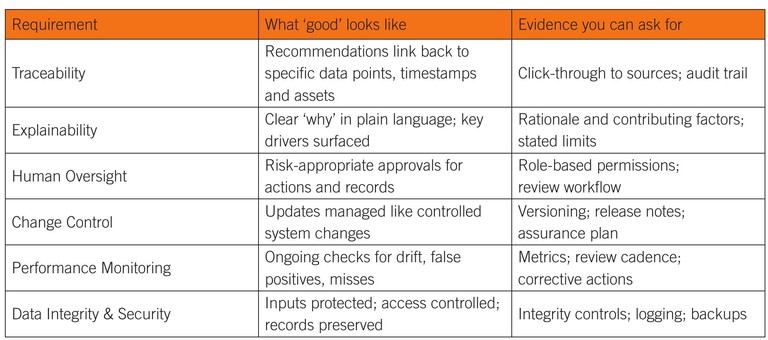

Table 2: A practical trust checklist for agentic AI in lab operations

When there are too many alerts and too little context, the system becomes harder to manage.

A quick vignette: when ‘everything is alarming’

Consider a common moment in many facilities: a burst of excursions across different assets within the same relative time window. A freezer alarm arrives, followed by a room humidity alert, followed by an incubator deviation. None of these signals is inherently unusual on its own – doors open, setpoints recover and people move through spaces. The operational risk is in the combination: which sample inventory is exposed; whether multiple assets share the same environmental driver; whether the event coincides with maintenance activity; and whether the pattern is repeating.

In a traditional workflow, a human must pull logs, check recent service notes, ask around and decide what to escalate. An agentic system can do the first pass instantly – correlate events, surface plausible contributing factors, rank urgency based on predefined criteria and propose the next several checks – all with links to the supporting evidence. Humans still decide and act, but they start from an organised picture, rather than a pile of alarms.
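A minimal sketch of that first pass, in Python, might group excursions that fall close together in time and then order each group by predefined urgency. Everything named here is hypothetical: the `Excursion` fields, the `URGENCY` weights and the `evidence_ref` pointer are assumptions for illustration, and a real system would draw its criteria from the lab's own procedures.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass
class Excursion:
    asset_id: str
    kind: str          # e.g. "freezer", "humidity", "incubator"
    at: datetime
    evidence_ref: str  # hypothetical pointer to the underlying log entry


# Hypothetical, lab-defined urgency weights (higher means more urgent).
URGENCY: Dict[str, int] = {"freezer": 3, "incubator": 2, "humidity": 1}


def group_by_window(events: List[Excursion], window: timedelta) -> List[List[Excursion]]:
    """Group events whose gap to the previous event is at most `window`."""
    groups: List[List[Excursion]] = []
    for ev in sorted(events, key=lambda e: e.at):
        if groups and ev.at - groups[-1][-1].at <= window:
            groups[-1].append(ev)
        else:
            groups.append([ev])
    return groups


def rank(group: List[Excursion]) -> List[Excursion]:
    """Order one group by predefined urgency, highest first."""
    return sorted(group, key=lambda e: URGENCY.get(e.kind, 0), reverse=True)


# Usage: the freezer, humidity and incubator events from the vignette,
# plus one unrelated freezer event three hours later.
t0 = datetime(2025, 1, 1, 9, 0)
events = [
    Excursion("FRZ-01", "freezer", t0, "log:1"),
    Excursion("ROOM-2", "humidity", t0 + timedelta(minutes=4), "log:2"),
    Excursion("INC-07", "incubator", t0 + timedelta(minutes=9), "log:3"),
    Excursion("FRZ-02", "freezer", t0 + timedelta(hours=3), "log:4"),
]
groups = group_by_window(events, timedelta(minutes=10))
first_pass = rank(groups[0])  # freezer first, then incubator, then humidity
```

Each ranked item keeps its `evidence_ref`, so the person reviewing the list can go straight to the record behind it.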

Trust is the constraint: explainability, traceability and oversight

If agentic AI is going to influence operational workflows, labs will evaluate it through the lens of trust. Trust is not just whether the AI is ‘right’; it is whether the system can show its work and operate within controlled boundaries.4 Agentic systems are evolving towards this level of support, moving from simple question-answering to more transparency in reasoning, prioritisation and recommendations. In regulated environments, any recommendation that affects action must be explainable and defensible. This is not optional. Here, existing guidance provides a useful framework. Data integrity principles such as ALCOA+ exist because decision-making requires reliable evidence.5,6

An agentic system that cannot link its recommendations back to specific data points, timestamps and asset identities will not be trusted; an agent that cannot show why it is prioritising one risk over another will be treated as a black box; and any system that appears to operate autonomously without clear human control will generate resistance.
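One way to picture these requirements is as a record structure in which a recommendation cannot be approved unless it carries its evidence and rationale, and in which approval is always a named human action. The Python sketch below is an assumption-laden illustration: the `Evidence` and `Recommendation` types and their fields are hypothetical, not a description of any existing system.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class Evidence:
    asset_id: str        # which asset the data point belongs to
    timestamp: datetime  # when it was recorded
    source: str          # hypothetical reference to the original record
    value: float


@dataclass
class Recommendation:
    action: str
    rationale: str  # why this risk is prioritised
    evidence: List[Evidence] = field(default_factory=list)
    approved_by: Optional[str] = None  # stays None until a human signs off

    def is_traceable(self) -> bool:
        """True only if there is a rationale and at least one evidence item."""
        return bool(self.rationale.strip()) and len(self.evidence) > 0

    def approve(self, reviewer: str) -> None:
        """Record human sign-off; refuse if the recommendation is not traceable."""
        if not self.is_traceable():
            raise ValueError("cannot approve without evidence and rationale")
        self.approved_by = reviewer
```

The design choice is that traceability is enforced at the point of sign-off rather than left to convention, which mirrors how regulated teams already treat records.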

For labs exploring agentic AI, the most practical approach is to ask what ‘controlled’ looks like (Table 2). This framework aligns well with broader AI governance efforts such as the NIST AI Risk Management Framework and companion profiles, which emphasise transparency, accountability and continuous monitoring.1,7 It also matches how regulated teams already think: systems are not trusted by belief, but by evidence and controls.

Agentic AI as an operational layer, not a product category

The most constructive way to view agentic AI is as a layer of operational intelligence that can connect to different functions: equipment monitoring; calibration tracking; preventive maintenance; asset management; and facility environmental control. Its value depends on the quality and integration of underlying data sources, and on the discipline of the workflows it supports.

This is why agentic AI is likely to emerge first in bounded use cases:

• Prioritising equipment risks across an asset fleet

• Identifying recurring drivers of excursions

• Recommending maintenance timing based on real usage patterns

• Supporting deviation investigations by surfacing relevant evidence

• Spotting sustainability opportunities through energy and utilisation analysis.

These are decision-heavy tasks that already exist. They are also tasks where humans spend significant time gathering context manually. Agentic AI has the potential to reduce that burden by giving decision-makers a structured, evidence-backed starting point.

Getting started: a practical path to adoption

Adopting agentic AI does not require a leap into autonomy. In most labs, the adoption path will look similar to other technology shifts – start small, define success, build confidence and expand.

For regulated organisations, this should align with existing quality and risk management systems. Frameworks such as ICH Q9 (R1) emphasise risk-based thinking and proportional controls – principles that apply directly here.8 Standards and guidance on computerised systems assurance likewise push organisations towards focusing rigour where it matters most, rather than applying blanket validation that slows progress without improving safety.9

The key is to avoid framing agentic AI as a system that will run everything. That kind of framing prompts reasonable resistance. Instead, frame it as a support layer:

• It reduces time to interpret what matters

• It improves consistency of response

• It helps people make better decisions faster, with documented rationale

• It operates under human control.

Where this is heading

For lab and facilities leaders, the practical question is not, ‘will AI run our lab?’ It is: ‘which operational decisions need better support, what evidence must back those decisions and what guard rails keep humans in control?’ When those answers are clear, agentic AI becomes less of a leap of faith and more of a disciplined evolution in how labs turn data into action. A disciplined pilot that’s bounded in scope, measured against agreed metrics, and reviewed through existing quality and risk processes lets teams learn quickly without overpromising autonomy.8,9

The near-term value of agentic AI in lab operations is resilience: reducing preventable disruptions by helping teams see what matters sooner, understand it faster and respond more consistently. If implemented with strong data integrity, transparent reasoning and risk-based governance, agentic systems can shift monitoring from reactive noise to contextual decision support.1,7,8

References:

3. Visit: webstore.iec.ch/en/publication/65543

4. Wang L et al (2024), ‘A survey on large language model based autonomous agents’, Frontiers of Computer Science, 18(6), 186345, 1-42

5. Visit: assets.publishing.service.gov.uk/media/5aa2b9ede5274a3e391e37f3/MHRA_GxP_data_integrity_guide_March_edited_Final.pdf

6. Visit: picscheme.org/docview/4234

7. Visit: iso.org/standard/77304.html

8. Visit: database.ich.org/sites/default/files/ICH_Q9(R1)_Guideline_Step4_2022_1219.pdf

9. Visit: fda.gov/media/188844/download

Rob Estrella is chief executive officer of Elemental Machines, where he leads the company’s strategy and execution to help life sciences organisations turn equipment and environmental signals into reliable, decision-ready insights. He brings over 20 years of experience in enterprise technology and commercial leadership, having built and led global sales, customer success, strategic alliances and commercial operations teams across biotech, healthcare and pharma.